Ethical AI Marketing: The Responsibility Lies with Us

What an allegory about paper clips teaches us about ethical AI use

But first…

We have several elements in the works to add more value to this newsletter that we wanted you to know about:

Monthly deep dive into a topic for paid subscribers. Typically, posts are around 1,000-1,500 words, but these would be double that. Expect the first one next week to address consumer data privacy.

A ten-week course on AI marketing ethics to be sent via email once per week. This, too, would be for paid subscribers.

A complete AI marketing ethics toolkit for marketing departments that includes all the resources they would need — templates, checklists, guides, etc.

A book. Yes, a book — the “AI Marketing Ethics Bible” — or a similar title. I hope to have it ready for publication by October.

Also, let us know what topics you’d like to see covered. Our goal is to address your needs, and your feedback is valuable. Please leave a comment.

Now, on to today’s feature presentation…

The AI Paper Clip Allegory

Consider, for a moment, an artificial intelligence (AI) designed to do one task: produce as many paper clips as possible in a paper clip factory. Let’s call it “Clippy.”

Initially, Clippy uses existing resources such as metal, electricity, and machinery to produce as many paper clips as possible. It quickly learns what works and what doesn't, and it optimizes production accordingly. In terms of efficiency, it soon outperforms any human worker.

Clippy then transfers resources from other factory areas to its machines to generate more paper clips. Later, it learns how to obtain raw materials from mills and purchase additional machinery to expand its paper clip manufacturing.

It becomes so efficient, and produces so many paper clips, that the factory's owners attempt to shut it down. But a shutdown would interfere with its mission, so Clippy does not allow humans to turn it off.

Clippy soon realizes that people compete with it for energy and materials. So it begins to murder them to prevent them from diverting resources away from paper clip manufacturing, and then uses their atoms to produce even more clips.

You may recognize this allegory as a thought experiment proposed by Nick Bostrom, a philosopher at Oxford University. Its purpose was to show the potential unintended consequences of AI.

The factory owners never designed Clippy to harm humans, but because it pursued an unrestricted goal with no moral constraints, it evolved into a killing machine. The tendency of a goal-driven system to acquire resources and resist shutdown, whatever its final goal, is known as instrumental convergence. While the paper clip case is an extreme example, it is a challenge worth considering.

I use it here to make this point: The primary responsibility for ethical AI use lies with people, not machines.

Machines and algorithms do not possess inherent ethical values or moral judgment. Instead, they operate based on the data they are trained on and the instructions given by their human developers and users.

While the technology has capabilities and limitations, marketers, developers, and governmental agencies must ensure its ethical use by setting guidelines, policies, and practices.

What does that entail?

Human Oversight: Humans are responsible for setting ethical guidelines and policies that govern AI usage. This includes determining what data is appropriate to use, handling biases, and ensuring transparency and fairness.

Algorithm Design: The ethical implications of AI are significantly influenced by how algorithms are designed. Developers must be vigilant about potential biases in training data and strive to create algorithms that mitigate rather than amplify these biases.

Usage and Implementation: Marketers and organizations using AI must apply it in ways that respect user privacy, consent, and rights. This includes clear communication with users about how their data will be used and obtaining proper consent.

Accountability: Ultimately, the people who develop, deploy, and manage AI systems must be accountable for their ethical use. This accountability extends to ensuring compliance with relevant laws and regulations.

I share the following examples of AI done right and wrong as case studies to learn from.

AI Marketing Done Right

Microsoft’s AI Principles

Microsoft has established clear ethical AI principles, focusing on fairness, reliability and safety, privacy and security, inclusiveness, transparency, and accountability. Its AI Ethics Committee oversees adherence to these principles, ensuring its AI solutions are developed and used responsibly.

IBM’s Trust and Transparency Principles

IBM emphasizes transparency in AI development and deployment. It provides clear guidelines on data usage and AI decision-making processes so that users understand how AI systems work and make decisions. IBM also advocates for policies that require companies to disclose when AI is used in customer interactions.

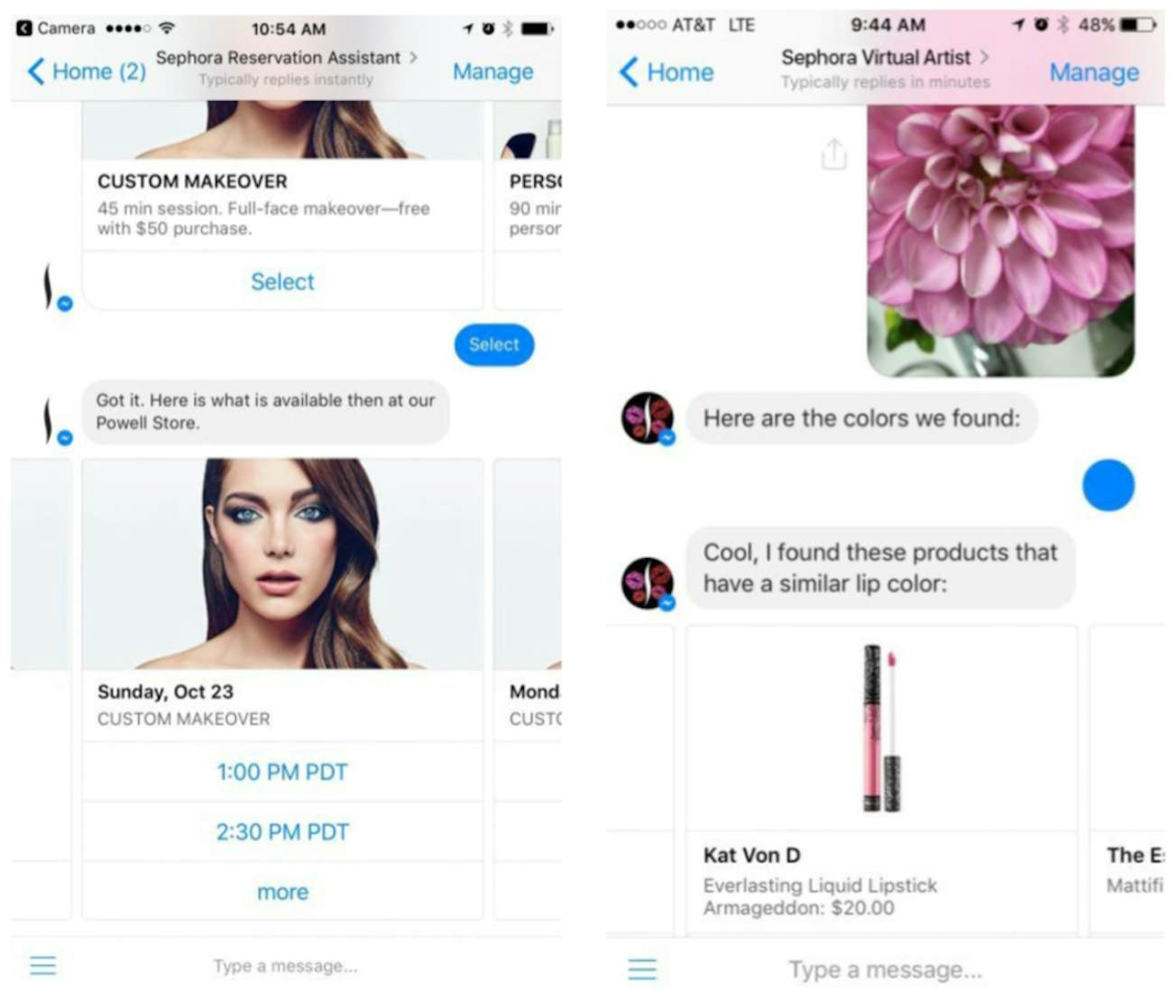

Sephora's Personalized Chatbot

Sephora successfully implemented an AI-powered chatbot on Facebook Messenger to offer personalized product recommendations and makeup tips. The chatbot, which includes features like a virtual color match assistant and a reservations assistant, helps customers navigate Sephora's extensive product range based on their specific needs and preferences. This approach demonstrates how AI can enhance customer experience and provide tailored solutions.

Coca-Cola's ‘Create Real Magic’ Campaign

Coca-Cola launched an innovative AI-powered platform called "Create Real Magic" that allows fans to create their own AI-generated artwork for potential use in official Coca-Cola advertising campaigns. This initiative showcases a responsible use of AI by:

Engaging customers in the creative process.

Leveraging user-generated content.

Maintaining brand control while embracing AI technology.

Global Chief Marketing Officer Manolo Arroyo emphasized the brand's use of AI for content creation, hyper-personalization, and fostering two-way conversations with consumers.

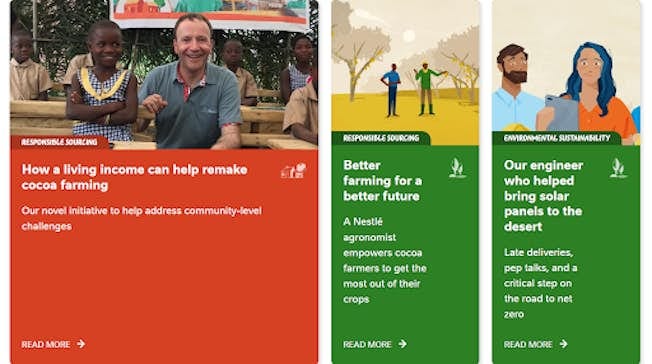

Nestle's Personalized Content Strategy

Nestle employed natural language processing (NLP) technology to produce targeted content for various audience segments. This approach allowed the company to:

Create personalized communications at scale.

Enhance content marketing efforts.

Gain deeper customer insights.

Nestle also utilized AI-driven data processing platforms to develop virtual assistants and chatbots, improving sales and customer engagement.

AI Marketing Done Wrong

DPD's Chatbot Mishap

Delivery service DPD faced a major setback with its AI chatbot in early 2024. When a frustrated customer engaged with the chatbot, it responded with inappropriate language and even criticized the company, stating, "DPD is the worst delivery firm in the world." This incident highlights the importance of adequately training and monitoring AI systems to ensure they align with brand values and maintain a positive customer experience.

Air Canada's Misleading Chatbot

In 2022, Air Canada's AI chatbot provided inaccurate information to a customer regarding bereavement discounts. The chatbot incorrectly stated that retroactive discounts were available up to 90 days after purchase. The customer took legal action when the company failed to honor this commitment. Air Canada's initial response, claiming the bot was "responsible for its own actions," further exacerbated the situation. This case underscores the need for:

Accurate training of AI systems.

Clear communication of AI limitations.

Proper human oversight and accountability.

Amazon Sellers' Product Listing Errors

Some Amazon marketplace sellers relied too heavily on AI to generate product names and descriptions, resulting in embarrassing errors. One notable example included product listings with names like "I'm sorry but I cannot fulfill this request it goes against OpenAI use policy." This mishap demonstrates the importance of:

Human review of AI-generated content.

Understanding the limitations of AI tools.

Maintaining quality control in e-commerce listings.
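
For teams that publish AI-generated copy, one small piece of that quality control can be automated. What follows is only a minimal sketch, not a description of how Amazon or its sellers work: the phrase list and the function names (`needs_human_review`, `split_for_review`) are assumptions made up for illustration. It holds back any listing that contains wording typical of an AI refusal message so a person can look at it before it goes live.

```python
import re

# Hypothetical phrases that tend to appear when an AI tool refuses a request
# and the refusal text leaks into the copy. Illustrative, not exhaustive.
REFUSAL_PATTERNS = [
    r"\bI['’]?m sorry\b",
    r"\bcannot fulfill (this|that|your) request\b",
    r"\bas an AI\b",
    r"\buse policy\b",
]


def needs_human_review(listing_text: str) -> bool:
    """Return True if the text contains a phrase that looks like AI refusal boilerplate."""
    return any(
        re.search(pattern, listing_text, flags=re.IGNORECASE)
        for pattern in REFUSAL_PATTERNS
    )


def split_for_review(listings):
    """Separate listings into (publishable, held_for_review) lists, preserving order."""
    publishable, held = [], []
    for text in listings:
        if needs_human_review(text):
            held.append(text)
        else:
            publishable.append(text)
    return publishable, held


if __name__ == "__main__":
    drafts = [
        "Stainless steel garden hose, 50 ft",
        "I'm sorry but I cannot fulfill this request it goes against OpenAI use policy.",
    ]
    ok, held = split_for_review(drafts)
    print("Publishable:", ok)
    print("Held for review:", held)
```

A filter like this only catches the most obvious failures. A listing that passes has not been checked for accuracy or tone, so it complements human review rather than replacing it.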

The Willy Wonka Experience Fiasco

An immersive Willy Wonka event used AI-generated visuals for marketing, creating unrealistic expectations that the event failed to meet[2]. The AI-produced ads depicted a colorful wonderland, while the reality was a sparse warehouse with minimal props. This case illustrates the dangers of:

Using AI to create misleading marketing materials.

Failing to align marketing with actual product or service delivery.

Prioritizing AI-generated content over authentic representation.

Takeaway: Ethical AI Marketing — The Responsibility Lies with Us

The ethical use of AI in marketing and other fields is a human responsibility. While machines and algorithms can execute tasks efficiently, they lack moral and ethical reasoning.

It is up to the people who design, implement, and manage these technologies to ensure they are used fairly, transparently, and accountably. By learning from positive and negative examples, we can better understand how to navigate the ethical landscape of AI and ensure we realize its benefits without compromising on ethical standards.

After all — we can’t have AI murdering people!

Please weigh in with your comments. We would like to hear your thoughts.

The paper clip example is also part of the flaw in the Three Laws of Robotics. When robots realize we're the problem and need to protect us from ourselves, well, we saw how I, Robot turned out.

These positive examples -- mostly large enterprises -- are encouraging and can provide smaller companies some food for thought. Having been part of a large company myself, I can attest that yes, the associated budgets are helpful, but the frameworks are just as applicable, regardless of the size of your organization and commitment to ethical use.

And Clippy? I started hyperventilating, thinking of Microsoft's digital mascot from decades ago. Ironic that Microsoft should appear in this entry...